Two documentaries about technology premiered at Sundance 2020: Shalini Kantayya’s ‘Coded Bias’ and Jeff Orlowski’s ‘The Social Dilemma’. Devika Girish of Film Comment reviewed both for The New York Times. What struck me most was how she ended her review of ‘Dilemma’: the film, she wrote, was streaming on Netflix, “where it’ll become another node in the service’s data-based algorithm”. On 5 April 2021, months after ‘Dilemma’ was released in October 2020, ‘Bias’ arrived on Netflix too.

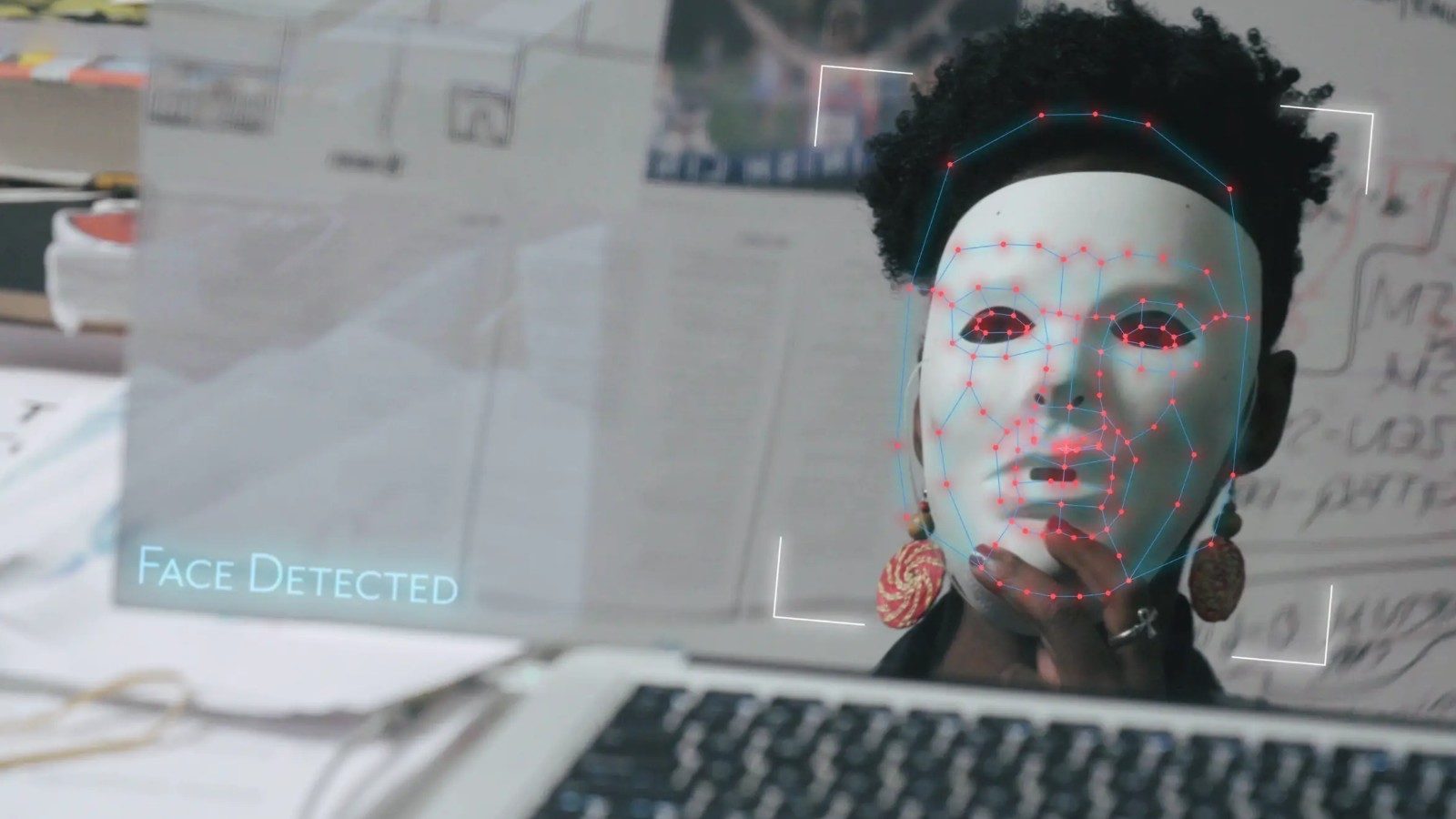

Kantayya’s is the better film because it has a clear angle. The synopsis on her website asks: “What does it mean when artificial intelligence (AI) increasingly governs our liberties?” It goes on to ask what the consequences are for the people AI is biased against. Wait a moment, how can AI be biased? That is where Kantayya’s film takes you through the experiences of Joy Buolamwini, an MIT Media Lab researcher who discovers that much facial recognition software fails to detect darker-skinned faces or the faces of women. She investigates the algorithms.

Her journey, thankfully, leads to a push for the first-ever legislation in the US to guard against bias in the algorithms that impact us all. Cathy O’Neil, who appeared in ‘Dilemma’, appears here too. So do Ravi Naik, Safiya Umoja Noble, Zeynep Tufekci, Virginia Eubanks, Silkie Carlo, and Meredith Broussard, among others.

Basically, when Buolamwini puts on a white mask, the computer detects a face; when she takes it off, it doesn’t. When she looked at the data sets, they were made up mostly of men and mostly of light-skinned faces. The film also draws on examples from China and the UK. Amy Webb says, “there are currently nine companies that are building the future of artificial intelligence, six are in the United States and three are in China.” They are Facebook, Apple, Amazon, Microsoft, Google, and IBM; Alibaba, Tencent, and something that has a logo of paws.

The film doesn’t stop at raising awareness; it goes a step further with its website, whose ‘Take Action’ section offers an activist toolkit, a declaration to sign, a discussion guide, and even a way to host a screening. Kantayya does fall into the trap of centering the entire story on Buolamwini. People who have not watched the film but have seen the hearing exchange between Buolamwini and Alexandria Ocasio-Cortez (AOC) already know how the story unfolds. Even so, there is joy in watching Buolamwini’s journey.

The personal stories and joys of Black women are poignant enough to watch. A part of me kept asking why these people want to be under surveillance at all. However, Buolamwini answers somewhere in the film that there are so many wrongful imprisonments that you would rather identify the person you are after accurately than do nothing at all. The film talks about the things we take for granted.

Buolamwini talks to a number of Black women who identify with the issue. They talk about police who have knocked on their doors, misidentified them, and held them accountable for crimes they did not commit. When Buolamwini’s hearing with AOC was televised, they watched her on the telly. They root for her, cheer for her, and pray for this lab researcher to set something right in the world.

Introduced to the world in the 21st century, this technology has been used but never governed. There is no policy. India uses technology to trace a trail of WhatsApp chats and track celebrities who may have consumed cannabis. That case, by the way, took less than a week, pursued by news channels and the police alike. Yet it took the police months to identify who shot Gauri Lankesh through security and facial recognition software. The government now has our fingerprints and iris scans in the name of Aadhaar.

Coming back to technology, nobody is registering cases against Twitter trolls or taking their threats seriously. There is no policy on internet use, and this is 20 years down the line. Our use of technology is only becoming more dependent and less governed with time.

Children under three years old get their education, entertainment, and family connections through technology. They know how to use gadgets before they know how to read or write. Digital literacy, one of the 21st-century skills, has overtaken basic literacy, and I do not think people have had the time to fully investigate the pros and cons. They just adapt to it.

China’s social credit system is based on points, and people with higher scores find it easier to form relationships. So people are keen on keeping their scores high, the film tells me. In the UK, the issues are the same as in the US, and it feels as if Steve McQueen’s ‘Small Axe’ anthology is not a period piece but the present day. People are misidentified for no reason, and the crusaders have to rely on a helpful and kind white woman. She is a member of the legislative assembly, and all she can do is make some phone calls to set the ball rolling. Meanwhile, what about the trauma that the wrongfully accused go through? The wait is endless but the consequences are for life, and for what? Because your technology did not bother to collect enough Black samples!

Preach all I may, I am tied to five of the nine companies building the future of AI, which only makes me a hypocrite for attempting to review this. Kantayya’s film, despite its few limitations, makes a strong case and comes at you with full force. Ignore this biased review and try the film on that other algorithm, which may not recommend it; key in the words ‘Coded Bias’ and you should be able to watch it.

Coded Bias is now streaming on Netflix
