The act of judging — of assigning value to someone or something based on performance — is probably as old as humanity itself. You can safely assume that even cavemen were sizing each other up: Who hunts better? Who builds the sturdier shelter? Who’s pulling their weight?

Formalized systems came much later. Popular tradition credits the Roman Empire with the thumbs up/thumbs down gesture at gladiatorial games, a blunt but effective metric (though historians still debate what the gesture actually looked like). By the 18th century, academic institutions began standardizing numerical grading systems. The 19th century introduced letter grades. And by the early 20th century, film criticism had entered the chat, with newspapers like the New York Daily News publishing some of the earliest recorded movie grades (at least according to a quick Google dive, so take that with a grain of salt).

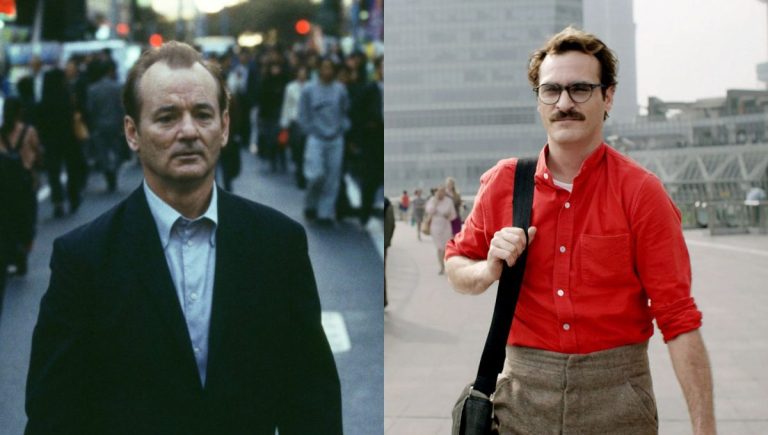

Fast forward to the 1970s, and modern film criticism as we know it began to crystallize. Roger Ebert popularized the four-star system, while he and Gene Siskel turned the thumbs up/thumbs down into a cultural mainstay on their television show — perhaps subconsciously echoing those ancient Roman gestures.

Now, I could theoretically try to confirm whether the Roman inspiration was intentional. But seeing as both critics have passed on, the only way to do that would involve a séance, and if horror movies have taught us anything, that never ends well. Sure, some people claim they’ve used a Ouija board and nothing happened. Good for them. With my luck, I’d end up summoning Pazuzu, Candyman, a Djinn, and Satan all at once. So that’s a hard pass.

Jokes aside, in the past decade — arguably since the moment movie ratings were invented — people have increasingly questioned their value in entertainment and beyond. Albums, films, TV shows, books: every score feels like a potential battleground. (I don’t spend much time in Goodreads comment sections, but I can only imagine.)

But where did it all begin?

The Rotten Tomatoes Effect

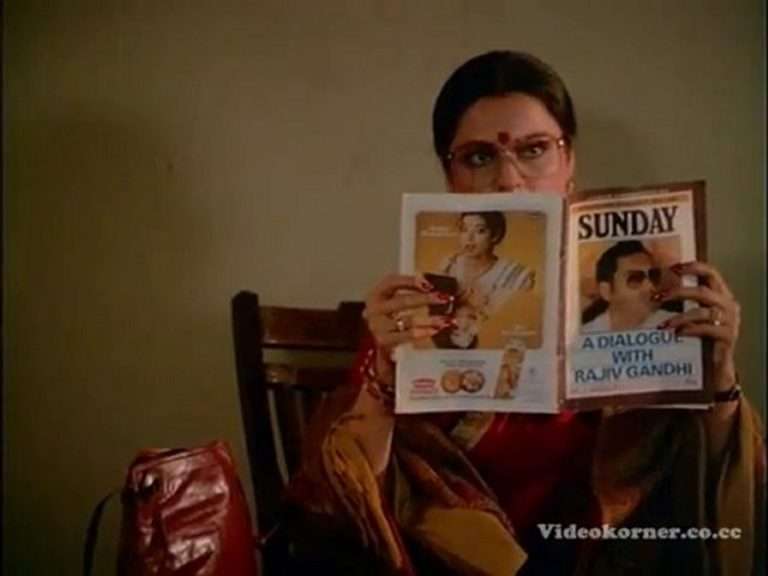

I still remember the first time I heard about Rotten Tomatoes. It was on a radio show I used to catch after school called La Hora Señalada (the Spanish title for “High Noon”), where two veteran critics would break down new releases and revisit older classics. Before every discussion, they’d reference “the Rotten Tomatoes score,” like it was some cinematic barometer of truth.

I didn’t actually visit the site back then. Internet access at home was spotty — dial-up at best, nonexistent at worst — and not exactly a priority when my family had bigger concerns. But even without browsing it myself, I grew up watching cinephiles treat the Tomatometer like gospel. A high percentage meant “good.” A low one meant “bad.” Simple as that.

Over the past decade, that perception seems to have intensified. The site has been around since 1998, but the explosion of high-speed internet, social media platforms like Twitter and Facebook, and the rise of online fandom culture amplified its influence. Suddenly, that big red or green number wasn’t just a reference point — it became ammunition in arguments.

So, how much should we actually care about it?

The answer isn’t straightforward.

First, it’s important to understand what that percentage represents. The Tomatometer isn’t an average movie rating — it’s the percentage of critics who gave the film a “fresh” (positive) review. That means a movie sitting at 80% doesn’t necessarily have critics raving about it. Many of those positive reviews could be modest 7/10s or 3.5/5s. The more telling metric is the smaller average rating number listed beneath the percentage — but let’s be honest, most people fixate on the big, bold score.
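To make that distinction concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the review scores are made up, and the cutoff of 6/10 for a "fresh" review is a stand-in, not the site's actual rule.

```python
from statistics import mean

# Hypothetical critic scores on a 10-point scale (invented for illustration).
lukewarm = [7, 7, 7, 7, 7, 7, 7, 7, 4, 4]
divisive = [10, 10, 10, 10, 10, 10, 10, 10, 1, 1]

# Assumption: a score of 6/10 or higher counts as a "fresh" review.
FRESH_THRESHOLD = 6


def fresh_percentage(scores, threshold=FRESH_THRESHOLD):
    """Share of reviews at or above the threshold, as a percentage."""
    fresh = sum(1 for score in scores if score >= threshold)
    return 100 * fresh / len(scores)


def average_rating(scores):
    """Plain arithmetic mean of the scores."""
    return mean(scores)


for name, scores in [("lukewarm", lukewarm), ("divisive", divisive)]:
    print(name, fresh_percentage(scores), average_rating(scores))
```

Both hypothetical films land on the same 80% fresh figure, yet their average ratings are 6.4 and 8.2. The percentage tells you how many critics leaned positive, not how strongly they felt.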

Filmmakers have criticized the site for oversimplifying complex critical opinions into a binary fresh/rotten system. And that critique isn’t entirely unfair. When nuanced reviews get distilled into a single color-coded badge, context gets lost.

Then there’s the audience score — which, at least historically, has been vulnerable to manipulation. The most infamous example came during the release of “Captain Marvel,” when organized groups review-bombed the film largely due to backlash against Brie Larson. The score plummeted before most people had even seen the movie. To their credit, Rotten Tomatoes implemented changes afterward to curb that kind of coordinated sabotage. Of course, the opposite phenomenon exists too: fans artificially inflating scores for films they love.

All of this reinforces one simple idea: the site is a reference point, not a verdict.

It can be useful as a quick snapshot of critical consensus, but it shouldn’t live on a pedestal. It can mislead. It can misrepresent nuance. And it may not reflect your own taste at all. There are plenty of low-rated films I adore. “Max Keeble’s Big Move” sits at 27%, and I’ll defend that gem every, any, what, where, why, when, and however time.

Another factor people rarely consider: critics are individuals with specific tastes. If a horror skeptic reviews a slasher or a rom-com enthusiast tackles an austere arthouse drama, their reaction may not align with your own sensibilities. That doesn’t make them wrong — it just means taste is subjective.

I believe the healthiest approach is to treat Rotten Tomatoes as a starting point. Read individual reviews. Seek out critics whose tastes align with yours. Cross-reference with other aggregators like Metacritic, which uses a weighted average system rather than a binary model. (Full disclosure: I haven’t relied on it heavily myself, but many cinephiles prefer its methodology.)
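For a rough sense of how a weighted average differs from a binary count, here is a small Python sketch. The weights and scores are entirely hypothetical, as is the cutoff of 60 for a positive review; this is not any aggregator's actual formula.

```python
# Hypothetical (critic_weight, score_out_of_100) pairs, invented for illustration.
reviews = [(1.5, 90), (1.0, 70), (1.0, 60), (0.5, 40)]


def weighted_average(pairs):
    """Mean of the scores, with each score counted in proportion to its weight."""
    total_weight = sum(weight for weight, _ in pairs)
    return sum(weight * score for weight, score in pairs) / total_weight


def positive_percentage(pairs, threshold=60):
    """Binary model: share of reviews at or above the (assumed) threshold."""
    positive = sum(1 for _, score in pairs if score >= threshold)
    return 100 * positive / len(pairs)


print(weighted_average(reviews))     # weighted model
print(positive_percentage(reviews))  # binary model
```

With these made-up numbers the weighted model gives 71.25 and the binary model gives 75.0. The weighted figure moves with how high or low each score is, while the binary one only registers which side of the line a review falls on.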

In the end, no percentage can replace your own experience. The most reliable metric will always be the one you assign after the credits roll.

The Value

In preparation for this article, I ran a small poll — and the results were both surprising and completely predictable. Much like politics (and, frankly, everything else these days), people are deeply divided on how much value they place on ratings. What caught me off guard, though, was that after hundreds of votes, the majority leaned toward the “don’t care” camp.

That lines up with a noticeable trend on platforms like Letterboxd, where more and more users are ditching the traditional star system in favor of a simple “heart” — or nothing at all.

So why is that happening?

From the responses and patterns I observed, one recurring reason is fluidity. Many people say their film ratings change constantly in their heads. A movie that feels like a four today might feel like a three-and-a-half next month. Repeatedly updating scores can become tedious, even exhausting. But the bigger issue seems to be perception. People worry, sometimes rightly so, that their ratings will be misinterpreted. For some, three stars is a solid, positive endorsement. For others, anything below four feels like a dismissal. That disconnect can spiral into unnecessary debates or, worse, online pile-ons.

Which brings me to what I like to call the comparison game.

This is where things get absurd. It’s when someone compares potatoes to lettuce. Sure, they both grow from the ground. They might share space on a burger plate. But beyond that? Completely different textures, flavors, and purposes.

Recently, I rated “Dhurandhar” four stars — the same score I gave “One Battle After Another.” A follower asked how I could possibly see those films as equals. But that’s the assumption baked into the comparison game: that identical ratings equal identical value. They don’t. One film might be a potato, the other a lettuce — or an apple. What do they meaningfully have to do with each other?

The root issue seems simple: people take their favorite art personally. If I love X and give it four stars, you’d better love it just as much — or at least rate it the “correct” way. Otherwise, the pitchforks come out. Disagreement isn’t just disagreement; it becomes a perceived attack.

And that’s where ratings shift from being shorthand expressions of personal taste to symbols people defend as if they were moral positions. In theory, a rating is just a snapshot of how something worked for one individual at one moment in time. In practice, it can feel like a referendum on identity.

Which says less about the numbers themselves — and more about how much we’ve invested in them.

When you rate a movie, do you stop and cross-reference every prior rating to ensure consistency across unrelated genres? The only time that kind of comparative calibration makes sense to me is within a contained body of work — ranking a director’s filmography, an actor’s performances, or entries in a franchise.

There are even stranger edge cases. I’ve given “The Room” a perfect score — not because it’s “objectively” great in a traditional sense, but because, for what it is, and what it accidentally achieves, it feels like a specific kind of perfection. Meanwhile, others might rate it a two-star disaster and still love it just as passionately. The number doesn’t always tell the whole emotional truth.

Now, for the positives.

As one commenter on the site put it, “rating forces us to confront the tough question: how much did this film really work for me?” A rating compels clarity. It forces you to distill your feelings into a decision.

In a way, this circles back to the heart-versus-stars debate. Clicking a heart on Letterboxd leaves a lot open to interpretation. Say you heart both “Dog Day Afternoon” and “12 Angry Men.” Great — but do you value them equally? Which one affected you more? Which one would you revisit first? Without a rating (or a detailed review), we’re left guessing.

And that ties into another undeniable reality: we’re living in a low-attention-span era. You can write a thoughtful, beautifully argued review, and many people simply won’t read it. On fast-scrolling platforms especially, the rating becomes a kind of headline, a shorthand signal. It tells followers, at a glance, whether you found something worthwhile.

Conclusion

Personally, I’ll always champion ratings.

Yes, they’re a double-edged sword. They can flatten nuance, spark unnecessary outrage, or reduce complex feelings to a tidy number. But they can also serve a practical purpose — if we’re willing to understand how to read them. There’s probably an argument to be made that audiences need a bit more education on interpreting ratings as shorthand rather than gospel.

Some critics have come up with creative systems that embrace that shorthand in interesting ways. Roger Ebert and Gene Siskel boiled it down to the now-iconic thumbs metric — elegantly simple, instantly readable. Dan Murrell leans into a more textual breakdown, while Cody Leach blends a numbered score with contextual explanation. Different approaches, same goal: distilling a reaction into something digestible without (ideally) stripping it of meaning.

It’s not easy. The more you think about cinema as art — deeply personal, highly subjective — the more assigning it a number can start to feel reductive. For some critics, the very act of rating becomes a burden, as if they’re forced to quantify something that resists quantification.

Are ratings imperfect? Absolutely. Are they reductive? Sometimes. But they’re also efficient and clarifying. In a media landscape built on quick takes and endless content, ratings function as a kind of necessary evil. They’re a snapshot, not the whole portrait. Used responsibly and interpreted thoughtfully, they don’t have to replace the conversation. They can simply be the entry point to it.